Editor’s Note: This is part four of an article titled “Analog Fetishes and Digital Futures” written by Mike Berk from a fascinating book, Modulations, a History of Electronic Music, Throbbing Words on Sound (2000). Republished on Headphone Commute with permission of the publisher, Caipirinha Productions / Cultures Of Resistance Network. Be sure to also read Everybody Loves a 303, and The Birth of Sampling.

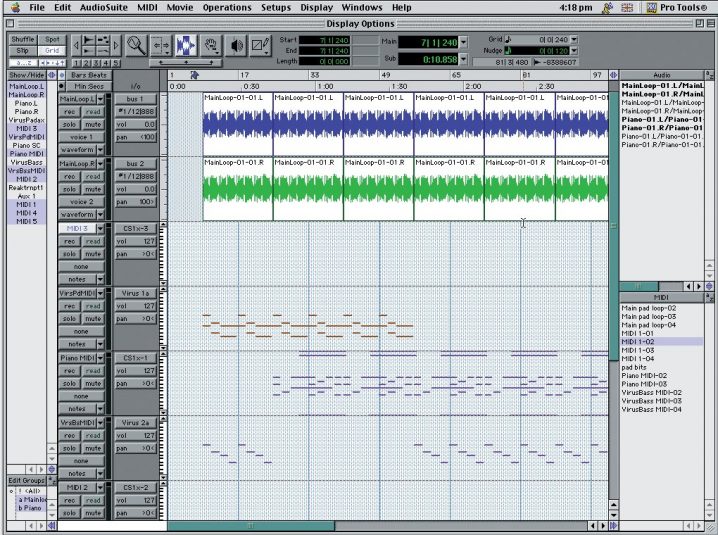

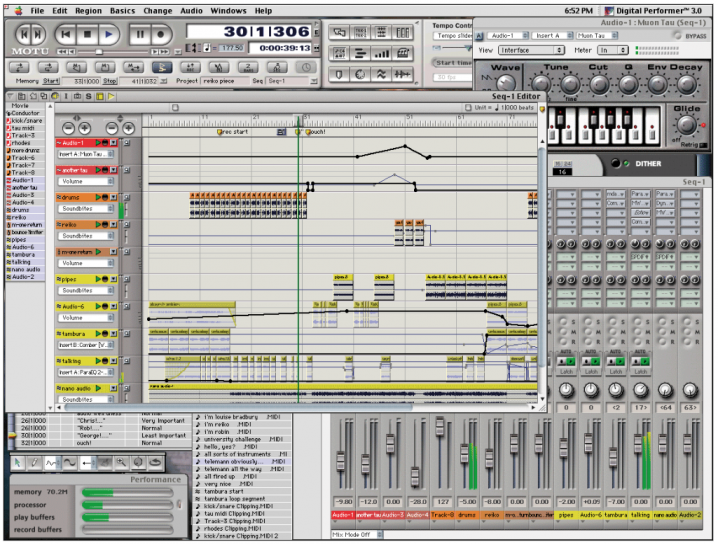

As computer-based digital audio workstations like Digidesign’s Pro Tools began to come down in price (driven by the massive advances in personal computer processing speeds in the mid-nineties), and as the major software sequencer developers began to add audio recording and sample editing functions to their already popular products, further innovations were on the way. MIDI, the communications protocol originally conceived as a way for a single keyboardist to control stacks of sound modules, found its real calling as the organizing principle of the computer music studio, linking software-based sequencers to the hardware universe of keyboard synths, sound modules, and samplers. Digital audio workstations (virtual multitrack recording studios) and sample editing software (stereo editors optimized for working with looped audio and featuring a wide variety of signal processing tools) brought a visual dimension to audio editing. Recorded tracks were displayed as graphical waveforms, making precise editorial work markedly simpler than it had been. Previously, trimming rhythmic loops had involved a fair amount of foresight and calculation. Graphical editing let producers make decisions on the fly and brought on, for better or for worse, a period of increasingly radical (or outlandish) editorial experimentation.

While word-processor-style cut-and-paste editing, complete with “undo” function, is digital recording’s most obvious advance over analog, less immediately apparent but no less important is digital’s liberation of pitch from duration. In analog recording, raising the pitch of a recorded snippet requires speeding up the tape and therefore shortening the length of the snippet. This becomes a real problem in multitrack recording because audio segments can’t be adjusted in pitch without ruining their synchronization with the rest of a project. Conversely, rhythmically offending segments can’t be sped up or slowed down without changing their pitch, leading to some potentially sticky harmonization problems.
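The arithmetic behind that trade-off is easy to sketch. The snippet below is only an illustration, assuming equal temperament (each semitone multiplies frequency by the twelfth root of two); the function name `varispeed` and the four-second example loop are invented for the occasion.

```python
def varispeed(duration_s, semitones):
    """Tape-style pitch change: return (speed_ratio, new_duration_s).

    Assumes equal temperament, so one semitone is a factor of 2 ** (1 / 12).
    """
    speed_ratio = 2.0 ** (semitones / 12.0)
    # Playing the tape faster raises the pitch and shortens the snippet
    # by the same ratio.
    return speed_ratio, duration_s / speed_ratio


# A two-bar loop at 120 BPM lasts 4 seconds (8 beats at 0.5 s each).
ratio, new_length = varispeed(4.0, 3)
print(f"speed x{ratio:.3f}, loop now lasts {new_length:.3f} s")
```

Raise the loop a full octave (twelve semitones) and it plays back in half the time, which is exactly why a retuned snippet falls out of step with the rest of a multitrack project.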

Taking advantage of the fact that samplers and digital recorders stored audio as infinitely mutable data, engineers set about solving this problem. They came up with time-stretching, time-compressing, and pitch-shifting algorithms that were provided as quick-repair tools for difficult mixes. Featured first on high-end professional samplers and recording workstations and aimed at postproduction professionals, these tools were meant to avoid the wholesale re-recording of entire tracks simply to fix a couple of flat notes or missed rhythmic cues. It became possible to compensate for inaccurate singers and tired drummers at mix-down without spending inordinate amounts of money on overdub sessions.
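For a rough sense of how duration can be changed without touching pitch, here is a deliberately naive overlap-add sketch. It is not the algorithm of any particular sampler or workstation, whose methods are not described here; the function name `time_stretch`, the grain size, and the test tone are all assumptions made for illustration. The idea is to copy short windowed grains from the source at one spacing and lay them down in the output at another.

```python
import math


def time_stretch(samples, stretch, grain=256):
    """Naive overlap-add stretch: stretch > 1 lengthens, < 1 shortens.

    Each grain is played at its original rate, so pitch is roughly
    preserved while the overall duration changes.
    """
    if len(samples) < grain:
        raise ValueError("input must be at least one grain long")
    hop_out = grain // 2          # grains overlap by 50% in the output
    hop_in = hop_out / stretch    # read position advances slower or faster
    # Periodic Hann window: overlapping copies at 50% sum to 1.
    window = [0.5 - 0.5 * math.cos(2 * math.pi * i / grain) for i in range(grain)]
    n_grains = int((len(samples) - grain) / hop_in) + 1
    out = [0.0] * ((n_grains - 1) * hop_out + grain)
    for g in range(n_grains):
        start_in = int(g * hop_in)
        start_out = g * hop_out
        for i in range(grain):
            out[start_out + i] += samples[start_in + i] * window[i]
    return out


# Hypothetical test tone: 4096 samples of a 440 Hz sine at 44.1 kHz.
tone = [math.sin(2 * math.pi * 440 * n / 44100) for n in range(4096)]
stretched = time_stretch(tone, 2.0)
print(len(tone), "->", len(stretched))
```

A sketch this crude makes no attempt to line up the phase of neighboring grains, so it smears and flutters audibly at anything but gentle settings. Commercial tools worked hard to hide such artifacts.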

The producers who would go on to develop drum and bass pushed this technology in directions its engineers had never expected. Time-stretching, time-compression, and pitch-shifting were never meant to be foregrounded as audible effects, or even to be aesthetically pleasing. They were engineered to be as inaudible as possible in operation, all the better to convince listeners that their favored diva could really get up into that altissimo range. Drum and bass innovators like Rob Playford, Dego McFarlane, and Rob Haigh dispensed with the divas (well, at least for the most part), and gave those algorithms the spotlight. Inaugurating a veritable age of enlightenment for breakbeat science, they began experimenting with extreme and explicit pitch-shifting and time-stretching effects, transforming a few simple funk and fusion drum loops into an arsenal of humanly unplayable rhythmic motifs: snare-drum-ascending-a-staircase fills, blurry hyperspeed hi-hat grooves, and stomach-turning sub-bass kicks.

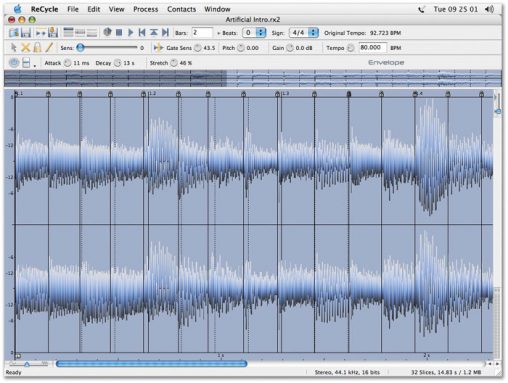

Much of their work depended on software sample editors like ReCycle, a package developed by Swedish outfit Propellerhead Software (no, not those Propellerheads) and marketed by leading sequencer developer Steinberg. ReCycle analyzes a sampled drum loop, which can then be chopped up into its component parts and reassembled, spliced, appended to itself, and otherwise mangled beyond recognition, all with greater speed, precision, and ease than could be accomplished using a hardware sampler alone. It enabled drum and bass producers to piece together their mutant percussion samples into continuously shifting polyrhythmic drumscapes. Pushed beyond their design parameters, pitch-shifting and time-compression operations produced digital noise that gave the drum sounds their characteristic hiss and crisp artificiality. While some popular breakbeats (the Winstons’ “Amen,” for instance) have become clichés, radical digital mutations more often than not transform the source materials beyond easy recognition. Plenty of drum and bass records even employ the supposedly orthodox techno tones of 808s and 909s, but the results rarely resemble anything the Belleville Three might consider kosher.
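The chop-and-reassemble workflow can be sketched in a few lines. What follows is not ReCycle’s actual analysis, which is not documented here; it is a crude stand-in that marks a slice wherever the energy of the signal jumps sharply. All names (`find_onsets`, `slice_loop`, `rearrange`, `make_hit`) and the synthetic four-hit loop are hypothetical.

```python
def find_onsets(samples, frame=64, threshold=4.0):
    """Return sample indices where frame energy jumps sharply.

    A crude transient detector: a frame counts as an onset when its
    energy exceeds `threshold` times the energy of the previous frame.
    """
    onsets = []
    prev_energy = 0.0
    for start in range(0, len(samples) - frame + 1, frame):
        energy = sum(s * s for s in samples[start:start + frame])
        if energy > 1e-6 and energy > threshold * max(prev_energy, 1e-6):
            onsets.append(start)
        prev_energy = energy
    return onsets


def slice_loop(samples, onsets):
    """Cut the loop into one slice per detected hit."""
    bounds = onsets + [len(samples)]
    return [samples[a:b] for a, b in zip(bounds, bounds[1:])]


def rearrange(slices, order):
    """Reassemble slices in any order; repeats are allowed."""
    out = []
    for index in order:
        out.extend(slices[index])
    return out


def make_hit(level, length=512):
    """Synthetic drum hit: a short decaying burst followed by silence."""
    return [level * (0.97 ** i) if i < 128 else 0.0 for i in range(length)]


# Stand-in for a sampled break: four hits at different levels.
loop = make_hit(1.0) + make_hit(0.5) + make_hit(0.8) + make_hit(0.3)
onsets = find_onsets(loop)
slices = slice_loop(loop, onsets)
mutant = rearrange(slices, [0, 2, 2, 1, 3, 3, 0])
```

Once a break has been reduced to a list of slices, reordering, repeating, and dropping hits becomes a matter of editing a short list of numbers, which goes some way toward explaining the speed and ease described above.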

At the end of the 1990s, the drum and bass community has drifted into a dangerous flirtation with traditional studio values, with producers waxing poetic about their Aphex Aural Exciters, SPL Vitalizers, and TC Electronic Finalizers (high-end psychoacoustic processors that impart a polished, professional sheen to finished mixes). While many drum and bass luminaries have flirted with the dubious pleasures of Yellowjackets- and Spyro Gyra-informed fuzak, under this sort of studio pressure, a generalized Steely Dan glassiness threatens the genre itself.